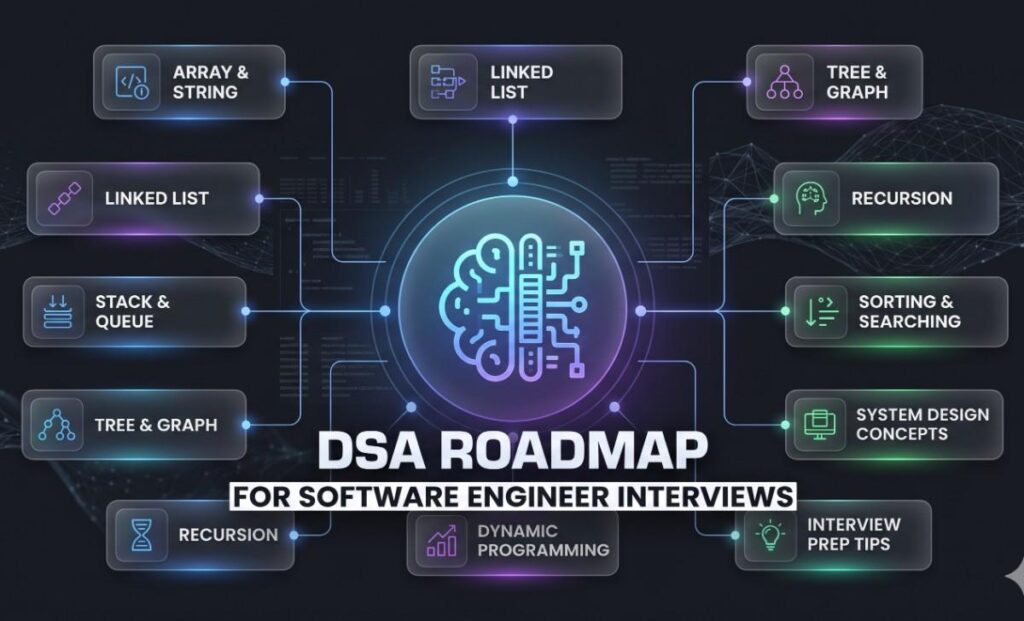

What Are You Missing in Your DSA Preparation?

Picture this: you’ve been grinding LeetCode for three months. You’ve solved over 200 problems. But when the Google phone screen starts, you freeze on a graph traversal question you’ve seen a dozen times — because you studied topics randomly, not strategically.

That experience is more common than it should be. The DSA roadmap for interviews is not just a topic list — it’s a sequenced learning architecture. The order in which you tackle concepts matters enormously, because advanced topics like dynamic programming assume fluency in recursion, which itself assumes comfort with arrays and recursion trees.

This guide strips away the noise. You’ll get a phased, dependency-aware sequence of exactly what to study, why each topic earns its place, and which real interview patterns each concept unlocks. This guide gives you a complete DSA roadmap for software engineer interviews, structured for real interview success.

🎯Who this is for:

Mid-level software engineers targeting FAANG, MAANG, or tier-1 tech roles who want a disciplined, ordered preparation strategy — not just a random problem list.

Table of Contents

DSA Roadmap: The Five-Phase Plan

This DSA roadmap for software engineer interviews is built around dependencies. You cannot reason about graph BFS until you understand queues. You cannot reason about memorization until you’ve struggled through recursive backtracking first. The five phases below encode those dependencies deliberately.

-

1. Core Data Structures Weeks 1–2 · Foundation

Arrays

Strings

Hash Maps

Two Pointers

Sliding Window

Everything else is built on top of these. Arrays are how memory works. Hash maps eliminate the O(n) inner loops that disqualify brute-force solutions.

-

2. Recursion, Stacks, Queues & Linked Lists Weeks 3–4 · Building blocks of traversal

Recursion

Stacks

Queues

Recursion is the mental model behind trees, graphs, and DP. Stacks implement recursion iteratively. Getting comfortable here is prerequisite to Phases 3–5.

-

3. Trees, Heaps & Binary Search Weeks 5–6 · Core interview fodder

BST

DFS/BFS

Heaps

Tree problems are the most common FAANG question category. Heaps unlock the top-K pattern. Binary search on abstract spaces (not just arrays) shows up constantly.

-

4. Graphs & Advanced Traversal Weeks 7–8 · Scale & complexity

Graph BFS/DFS

Union-Find

Topological Sort

Graph problems test your ability to model real-world problems (networks, dependencies, state machines). Union-Find alone covers a surprising number of “connected components” questions.

-

5. Dynamic Programming Weeks 9–12 · Advanced — earn your place here

1D DP

2D DP

Knapsack

DP separates mid-level candidates from senior-level ones. It’s not about memorising solutions — it’s about recognising overlapping subproblems. Attempting this without Phases 1–4 is why most people struggle.

Arrays, Strings, and Hashing — Why You Start Here

The compulsion to skip ahead is real. Arrays feel basic. But virtually every interview problem operates on arrays in some capacity, and the patterns you learn here — two pointers, sliding window, prefix sums — reappear throughout the entire roadmap.

The Two-Pointer Pattern

Two pointers is not about using two variables — it’s about reducing an O(n²) problem to O(n) by maintaining invariants as both ends of an array converge. Consider the classic “container with most water” problem: a naïve approach checks every pair of lines. Two pointers works because once you identify the shorter of the two walls, no pair involving that wall can do better, so you can safely advance past it.

This leap — from checking everything to eliminating entire search spaces based on a maintained invariant — is the conceptual kernel that powers binary search, merge sort, and a significant portion of graph problems. Learn it deeply here, not just mechanically. You can refer GeeksForGeeks.

Hash Maps: The O(1) Trade-off Engine

Hash maps are the single most useful tool in interview prep. Their function is simple: convert a O(n) lookup into an O(1) lookup at the cost of O(n) extra space. Two-Sum is boring; what’s interesting is realising that the same logic unlocks longest substring without repeating characters, grouping anagrams, and finding subarrays with a given sum.

Think of a hash map less as a data structure and more as a running memory — a way to ask “have I seen this condition before?” in constant time. Spotify’s recommendation engine, for instance, fundamentally relies on the same pattern: maintaining a fast-lookup cache of previously computed similarity scores to avoid recomputing from scratch. You can read more about Hash Maps on freecodecamp.

💡Pause and reflect:

Can you derive the sliding window solution for “minimum window substring” from first principles, without looking at notes? That ability — not problem count — is what interviews test.

Recursion, Stacks, and Linked Lists — The Mechanics of Thinking Recursively

Many candidates treat linked list problems as isolated exercises. They’re not. Linked lists are a training ground for pointer manipulation and multi-variable state — the same cognitive skills you need to write correct recursive tree traversals.

Recursion: Learning to Trust the Function

The hardest conceptual shift in this entire roadmap is learning to write a recursive function correctly without tracing every call mentally. The technique is to assume your function already works for the smaller case and ask: what do I need to do at the current level, given that everything below me is handled?

Think of it like a chef coordinating a kitchen team: the head chef doesn’t prepare every dish personally — they trust that each station delivers their part correctly, and they do only the final assembly. The return statement at the base case is the delivery; every other level is assembly. Practicing this mental model on simple problems (reverse a linked list recursively, flatten a nested list) before hitting trees pays enormous dividends. You can refer to this free resource on freecodecamp.

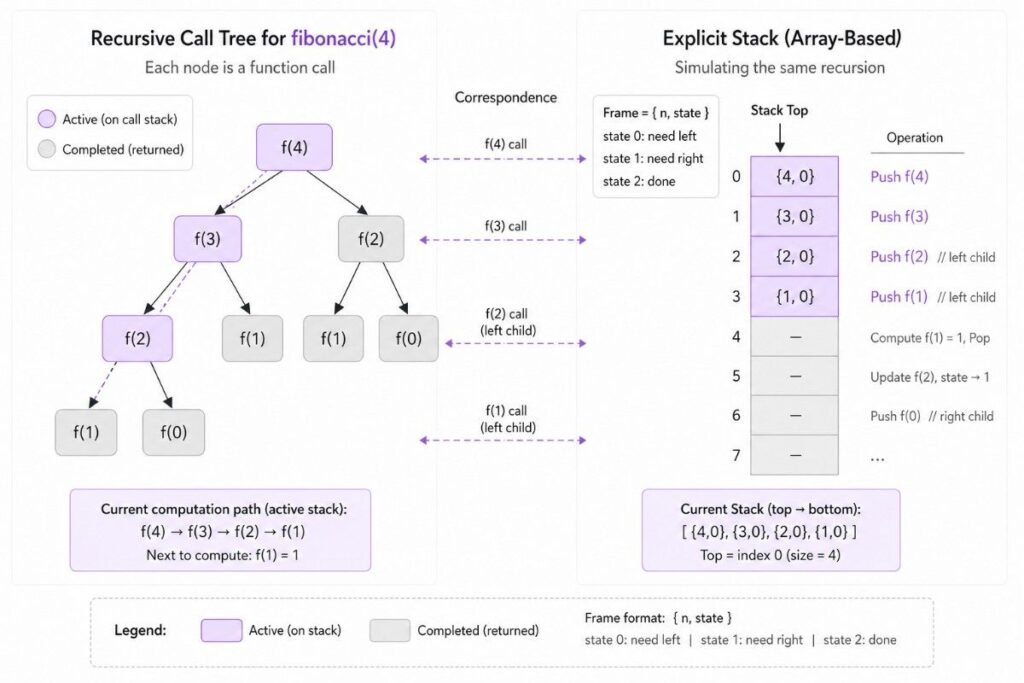

The Stack — Recursion Made Explicit

Every recursive call creates a stack frame. Understanding this lets you convert any recursive solution into an iterative one — a skill that interviewers sometimes ask for explicitly, and that always impresses when volunteered. The classic example is iterative in-order tree traversal: once you see it as manually managing the call stack with a while loop and explicit stack, the pattern snaps into place for dozens of related problems.

Monotonic stacks — where you maintain a stack of elements in ascending or descending order — deserve special attention. They power “next greater element,” “largest rectangle in histogram,” and “daily temperatures” — a pattern family that appears far more often in senior-level interviews than most guides acknowledge.

Trees, Heaps, and Binary Search — The Heart of FAANG Interviews

If there is one category that defines the FAANG coding round, it is trees. Binary trees, binary search trees, n-ary trees, tries — and the traversal patterns that connect them. Combined with heap-based problems and the underrated power of binary search on answer spaces, this phase is where most of your interview value lies.

Tree Traversal as a Mental Model

DFS pre-order, in-order, and post-order traversals are not separate things to memorise — they are variations of a single recursive structure that differ only in when you process the current node relative to its children. Getting deeply comfortable with this single observation eliminates entire categories of confusion.

Post-order traversal, in particular, is the engine behind problems where you need to compute something about a subtree before the parent node can know its own value — diameter of binary tree, maximum path sum, check if tree is balanced. These are genuinely hard problems for candidates who haven’t internalised post-order semantics.

Binary Search on Answer Spaces

Most candidates apply binary search only to sorted arrays. Senior candidates apply it to anything with a monotonic condition — which is a vast category. “Minimum days to make m bouquets,” “koko eating bananas,” “split array largest sum” — none of these mention a sorted array, yet all are binary search problems. The key insight: if the answer can be framed as “find the smallest value of X such that condition(X) is true,” and condition is monotonic, binary search applies.

This pattern is a genuine differentiator. A candidate who spots a binary-search-on-answer solution in a problem that looks like a simulation problem will consistently impress interviewers, because it signals they’re thinking about problem structure, not surface features.

“The candidate who solves a simulation problem with binary search isn’t lucky — they’ve trained themselves to see monotonic structure everywhere.”

Heaps and the Top-K Pattern

Heaps have one job: give you the current minimum or maximum in O(log n). But that one job unlocks a surprisingly rich pattern space. Any problem asking for the k-th largest element, a running median, or the k closest points is a heap problem. The insight is that you often don’t need the full sorted order — only the boundary between the top-k and the rest.

Merge k sorted lists — a canonical interview problem at Google and Meta — is essentially asking you to efficiently maintain that boundary across k simultaneous streams.

Graphs — Modelling the Real World

Graph problems intimidate candidates who perceive them as specialised. They are not. A graph is just a collection of relationships, and a staggering number of real problems reduce to graphs once you see them correctly: course prerequisites are a DAG, islands in a grid are a graph, network delays are a weighted graph, word ladders are an unweighted BFS.

The Three Templates That Cover 90% of Graph Problems

Rather than treating every graph problem as novel, study three templates deeply: BFS for shortest paths in unweighted graphs, DFS with a visited set for connected components and cycle detection, and Union-Find for dynamic connectivity queries. Each template has a single clean implementation — and once you’ve internalised them, you spend interview time reasoning about the problem model rather than the traversal mechanics.

Topological sort — Kahn’s algorithm in particular — extends BFS to handle dependency ordering. It shows up in build systems, package managers (npm resolves dependency graphs this way), and course scheduling problems. Understanding why it works (it processes nodes only when all their prerequisites have been handled) is more important than memorising the code.

🔗Modelling insight:

Before coding any graph problem, spend 60 seconds explicitly stating:

“The nodes are ___. The edges are ___. I’m looking for ___.” Candidates who skip this step frequently solve the wrong problem entirely.

Thought experiment: How would you model a job scheduler (where some jobs must finish before others can start) as a graph? What traversal algorithm would detect a deadlock in that system?

Dynamic Programming — Why Most Explanations Fail You

DP has a reputation for being impenetrable. Most of that reputation comes from learning it the wrong way: memorising recurrence relations for specific named problems rather than understanding the underlying structure they all share. Once you see that structure, DP becomes pattern recognition, not rote memorisation.

The Two Questions That Define Every DP Problem

Every DP solution requires answering exactly two questions before writing any code. First: what is the subproblem? Define it precisely — “the maximum profit using the first i items with capacity j,” not “the answer for smaller inputs.” Second: what is the recurrence? How does the answer to the current subproblem depend on smaller subproblems?

The reason DP problems feel arbitrary is that candidates skip question one. They jump directly to the recurrence, fail to make it precise, and end up with an implementation that almost works. Netflix’s content recommendation system, at a conceptual level, solves a variant of the weighted interval scheduling problem — which is itself a 1D DP problem where each subproblem asks “what is the best set of shows to recommend ending at time t?” Defining the subproblem precisely is what makes the whole structure click.

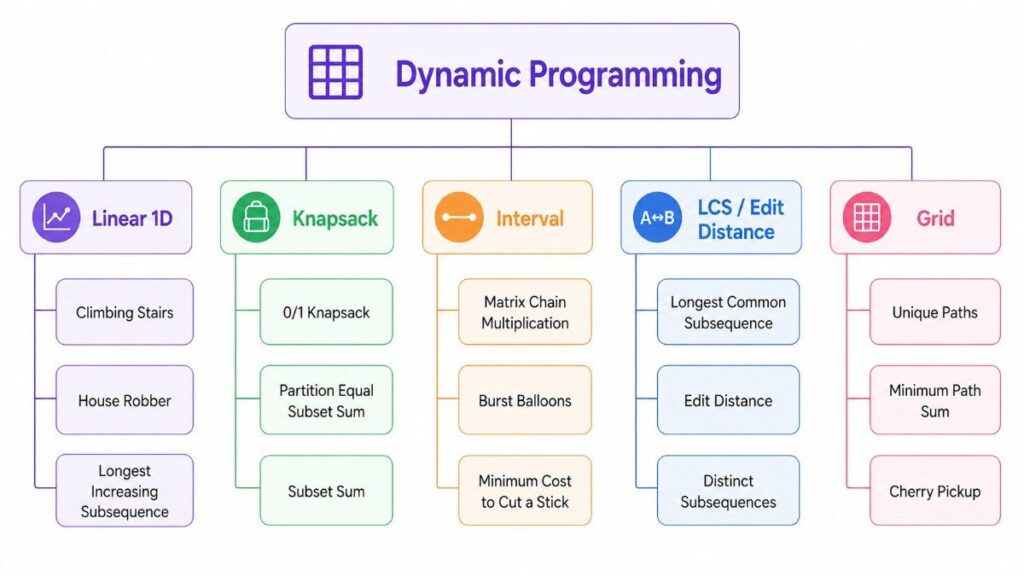

The Five DP Pattern Families Worth Mastering

Rather than learning 50 distinct problems, learn five pattern families. Linear sequences (climbing stairs, house robber, longest increasing subsequence) share the same backbone — each position depends on a small window of previous positions. Knapsack variants (0/1 knapsack, coin change, partition equal subset sum) all reduce to a 2D table where one axis is items and one is capacity.

Interval DP (matrix chain multiplication, burst balloons) works on contiguous subarrays, where the subproblem is always “what’s the optimal cost for this interval?” LCS/edit-distance problems form their own family, characterised by comparing two sequences character by character. And grid DP (unique paths, minimum path sum) trivially extends 1D DP to two dimensions.

Platforms like Gururo are structured to reinforce exactly these families in sequence, which is important: practicing knapsack variants before you have solid 1D DP intuition leads to confusion, not understanding.

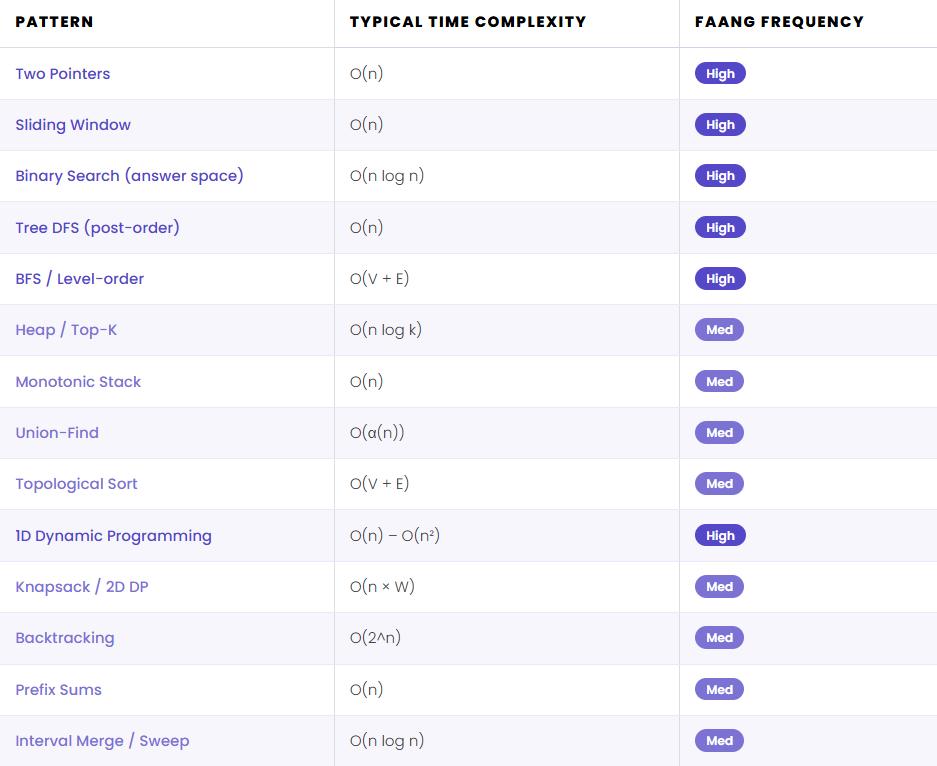

The 14 Patterns That Unlock Most FAANG Problems

These patterns are the vocabulary of DSA interviews. Each problem is an instance of one or two of them — and recognising the pattern in the first 2–3 minutes of a problem is what separates smooth interviews from halting ones.

Frequently Asked Questions

How many LeetCode problems do I actually need to solve?

Quality beats quantity. 150–200 problems solved with deep understanding — being able to explain the pattern, code it cleanly, and identify variations — outperforms 400+ problems where you relied on solution hints. Focus on mastering the 14 pattern families; the problems are vehicles for internalising those patterns, not the goal themselves.

Should I learn dynamic programming before graphs?

No — and the order matters significantly. Graph traversal (BFS, DFS) builds the recursive intuition that DP requires. Attempting DP without fluent recursion and a solid understanding of state spaces leads most candidates to memorise recurrences rather than derive them. Complete Phases 3 and 4 first.

What is the DSA roadmap for cracking FAANG interviews?

A phased, dependency-aware sequence: begin with arrays, strings, and hash maps; progress through recursion and linked lists; master trees, binary search, and heaps; tackle graph algorithms; and finally approach dynamic programming. The key differentiator is studying topics in this exact order — later topics assume fluency in earlier ones.

Is dynamic programming always asked in FAANG interviews?

Not always, but frequently at senior levels and virtually always at L5+ at Google, Meta, and Amazon. More importantly, DP problems are often used as distinguishing questions — they’re given to candidates who’ve already cleared the basic rounds. Not being prepared for DP is a risk at any level targeting tier-1 roles.

How long should the DSA interview preparation take?

For someone with solid programming fundamentals but limited DSA exposure: 8–12 weeks at ~2 hours per day. For someone refreshing existing knowledge: 4–6 weeks. The ceiling is not time invested — it’s whether you’ve genuinely internalised each pattern before moving to the next phase.

The Roadmap Is a Means, Not an End

The most common mistake in interview preparation is treating problem-solving as an inventory exercise — a checklist of topics to tick off. This roadmap is designed to do the opposite: to build a small number of deep mental models that transfer across hundreds of problems.

Arrays and hash maps teach you to trade space for time. Recursion teaches you to trust decomposition. Trees teach you to process structure bottom-up. Graphs teach you to model relationships. Dynamic programming teaches you to build answers incrementally from decisions you’ve already evaluated. These are not interview tricks — they are fundamental ways of reasoning about computation.

The candidates who stand out in FAANG coding interviews are not the ones who solved the most problems. They are the ones who can, in the first two minutes of an unfamiliar problem, say with confidence: “This looks like a binary search on the answer space — let me define the condition function.”

Join the discussion: Which of the five phases do you find most challenging — and why? Share in the comments. If you’re between phases, describe the specific problem where you felt the prerequisite gap most acutely. Your experience might be the insight that helps the next engineer on the same path.