Imagine you’re a delivery driver zipping through city streets, heart racing as orders flood in during the dinner rush. Behind the scenes, DoorDash’s ensemble learning models for time series forecasting are predicting that surge, helping ensure hot meals arrive on time, drivers stay balanced, and customers keep coming back.

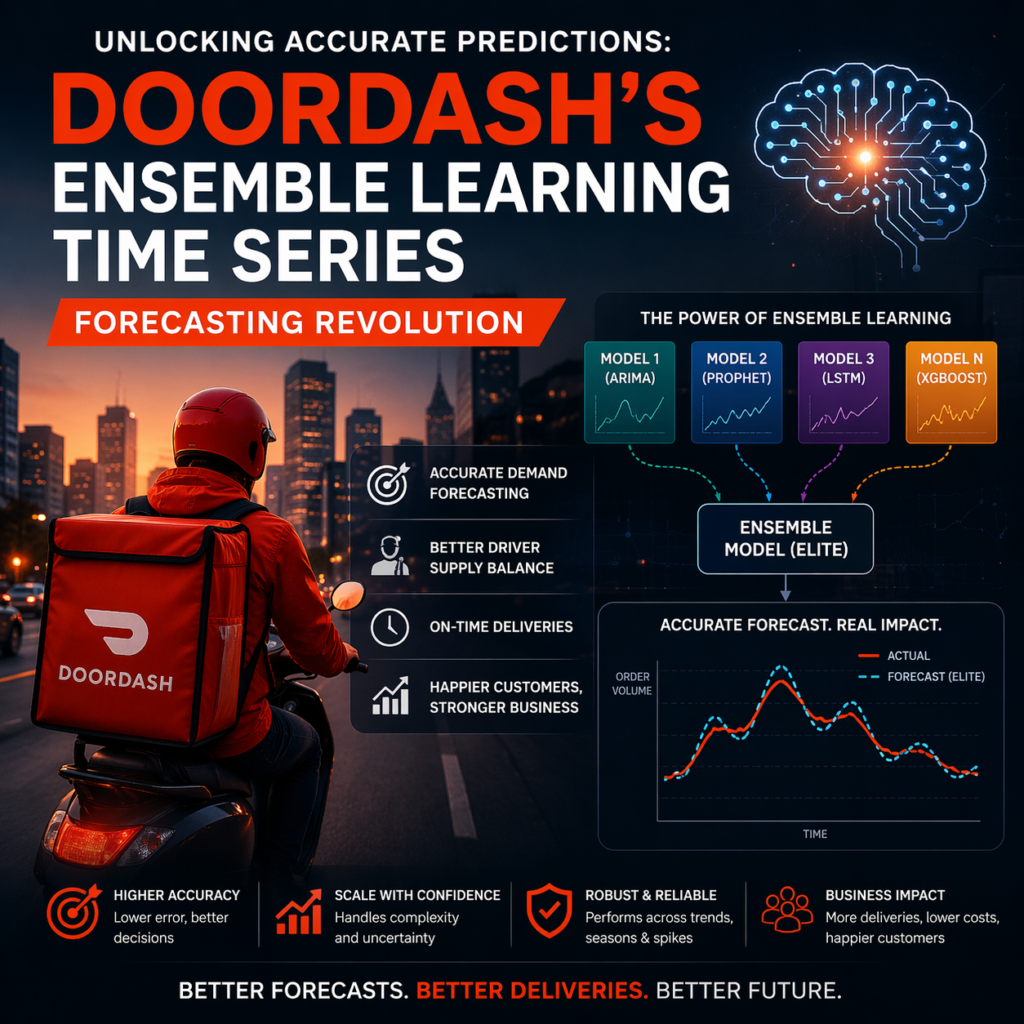

That’s the magic of smart forecasting in action. But what if I told you that getting it right isn’t about one super-smart model? It’s about a team of specialists working together, much like a band jamming to create a hit song. At DoorDash, they cracked this code with ELITE, an ensemble learning system for time series forecasting. In this deep dive, we’ll unpack their journey, from gritty challenges to game-changing wins, and show how you can apply the same ensemble methods to your own forecasting problems.

Whether you’re a data scientist wrestling with volatile sales trends or a business leader eyeing demand spikes, this guide is your roadmap. We’ll blend DoorDash’s real-world story with actionable tips, backed by stats and trends, to help you build robust time series forecasting models that don’t just predict—they perform.

What Is Ensemble Learning in Time Series Forecasting?

Say you’re forecasting weekend crowds at your favorite coffee shop. A simple trend line might miss the Friday surge from social media buzz, while a fancy neural network could overfit the quiet Sundays. Enter ensemble learning for time series forecasting: an approach where multiple models pool their strengths to deliver sharper, more reliable predictions.

At its core, ensemble learning for forecasting combines outputs from diverse “base” models, such as statistical models from statsmodels or gradient-boosted trees from LightGBM, into a unified forecast. In the stacking variant, think of a roundtable discussion: each expert chimes in, and a meta-model (the “stacker”) weighs their input to form the final call. This isn’t just theory; research published in the Journal of Machine Learning Research found that stacking can outperform Bayesian model averaging when none of the candidate models is exactly correct.
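To make the idea concrete, here is a minimal sketch of the simplest possible ensemble, an equal-weight average, on made-up numbers. The `actual`, `trend_model`, and `boosted_model` arrays are hypothetical, not DoorDash data, and were chosen so the two models err in opposite directions. A stacker generalizes this by learning the weights instead of fixing them at 0.5.

```python
import numpy as np

# Hypothetical daily order counts and two base-model forecasts.
actual = np.array([100.0, 120.0, 90.0, 110.0, 130.0])
trend_model = np.array([110.0, 128.0, 101.0, 118.0, 141.0])   # tends to over-forecast
boosted_model = np.array([92.0, 111.0, 82.0, 103.0, 121.0])   # tends to under-forecast

def mae(y_true, y_pred):
    """Mean absolute error."""
    return float(np.mean(np.abs(y_true - y_pred)))

# Equal-weight ensemble: the simplest combination rule.
ensemble = (trend_model + boosted_model) / 2.0

for name, pred in [("trend", trend_model), ("boosted", boosted_model), ("ensemble", ensemble)]:
    print(f"{name:>8}: MAE = {mae(actual, pred):.2f}")
# trend 9.60, boosted 8.20, ensemble 0.90
```

If both models erred in the same direction, averaging would help far less, which is why base-model diversity matters.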

Why does this matter for time series? Time series data (think stock prices, website traffic, or DoorDash orders) mixes trends, seasons, and sudden jolts like holidays or weather swings. A single model might nail the seasonal swing but flop on outliers. Ensembles smooth those edges, boosting accuracy by up to 10-15% in volatile scenarios, per McKinsey’s insights on AI-driven operations.

In today’s fast-paced world, where global e-commerce sales were projected to reach $6.5 trillion by 2023 (Statista), nailing these predictions isn’t optional; it’s survival. DoorDash’s ELITE model exemplifies this, turning raw data into forecasts that power everything from driver scheduling to inventory planning.

The Hidden Challenges in Time Series ML: Why Single Models Fall Short

Let’s get real: Forecasting isn’t a straight shot down a predictable highway. It’s more like navigating rush-hour traffic with blind spots everywhere. Three time series ML challenges pop up fast: unrealistic assumptions, models whose strengths are biased toward certain conditions, and outright instability.

Take assumptions: Many models assume clean, single-seasonality patterns, but real life throws curveballs like weekly cycles layered over yearly ones. The textbook Forecasting: Principles and Practice devotes a section to this kind of complex seasonality, which trips up models built around a single seasonal period; the result can be 20-30% error spikes in long-horizon forecasts.
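One common remedy, used by Prophet and described in Forecasting: Principles and Practice as harmonic regression, is to encode each seasonal period with its own sine/cosine (Fourier) terms, so a single regression can carry weekly and yearly cycles at once. A minimal sketch, assuming daily data; the periods (7 and 365.25 days) and harmonic counts are illustrative choices, not DoorDash’s settings.

```python
import numpy as np

def fourier_terms(t, period, n_harmonics):
    """Return sin/cos columns for one seasonal period.

    t: 1-D array of time indices (e.g., day number).
    period: length of the seasonal cycle, in the same units as t.
    n_harmonics: number of sine/cosine pairs; more pairs capture sharper shapes.
    """
    cols = []
    for k in range(1, n_harmonics + 1):
        angle = 2.0 * np.pi * k * t / period
        cols.append(np.sin(angle))
        cols.append(np.cos(angle))
    return np.column_stack(cols)

# Two years of daily time steps.
t = np.arange(730, dtype=float)

# Weekly and yearly seasonality encoded side by side.
weekly = fourier_terms(t, period=7.0, n_harmonics=3)
yearly = fourier_terms(t, period=365.25, n_harmonics=10)
X = np.hstack([weekly, yearly])

print(X.shape)  # (730, 26): 2*3 weekly + 2*10 yearly columns
```

These columns can then feed any regressor (linear, gradient-boosted, etc.) alongside trend and holiday features.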

Then there’s bias: Some models shine in stable trends but crumble during black-swan events, like a sudden storm slashing delivery speeds. DoorDash faced this head-on with granular targets: tens of thousands of switchback-level delivery-time forecasts for experiments. Traditional grid searches? They’d chew through hours of compute and hundreds of dollars in cloud costs per run, because the number of configuration combinations grows exponentially.
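To see why exhaustive grid search blows up, count the configurations. The hyperparameter names and value lists below are hypothetical, chosen only to illustrate the arithmetic: grid size is the product of the option counts, so it grows exponentially with the number of knobs, and then multiplies again by the number of forecast targets.

```python
from itertools import product
from math import prod

# Hypothetical search space for a single forecasting pipeline.
search_space = {
    "model": ["prophet", "lightgbm", "ets"],
    "lookback_weeks": [8, 26, 52, 104],
    "holiday_features": [True, False],
    "outlier_handling": ["none", "clip", "winsorize"],
    "learning_rate": [0.01, 0.05, 0.1],
}

# Grid size is the product of the option counts per knob.
configs_per_target = prod(len(v) for v in search_space.values())

# Sanity check by actually enumerating the grid.
assert configs_per_target == sum(1 for _ in product(*search_space.values()))

n_targets = 20_000  # hypothetical: tens of thousands of switchback-level targets
total_fits = configs_per_target * n_targets
print(configs_per_target, total_fits)  # 216 4320000
```

Adding just one more three-valued knob would triple both numbers.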

And instability? Solo models can spit out wild swings, sharp spikes that spook stakeholders. In an industry where an often-cited estimate says 80% of ML models never make it to production, these glitches add yet more risk. DoorDash’s forecasting team knew the drill: They needed the accuracy gains of ensembling without the bloat.

Current trends echo this pain. Gartner predicts 75% of enterprises will shift to hybrid AI by 2025, blending ensembles for robustness amid data explosions from IoT and e-commerce. But ignoring these hurdles? You’re leaving money on the table—poor forecasts cost retailers up to 10% in lost revenue annually.

DoorDash's Breakthrough: How They Implemented Ensemble Learning Time Series Forecasting

DoorDash didn’t just tweak; they reinvented. Their ELITE (Ensemble Learning for Improved Time-series Estimation) framework uses stacking with time-aware validation: base models are trained on time-ordered slices of history, and a meta-learner on top blends their predictions. It’s like upgrading from a solo guitarist to a full orchestra, all while keeping the show tight and budget-friendly.

Step-by-Step: Building the Ensemble Model Workflow

Here’s how they pulled it off, drawn straight from their playbook:

Select Base Learners Diversely: DoorDash tapped their Forecast Factory, a scalable platform integrating Prophet, LightGBM, and custom handling for outliers, holidays, and weather. Diversity is key; mixing statistical and ML models reduces blind spots. Tip: Aim for 5-10 base models, penalizing complexity to avoid overfitting.
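A minimal sketch of a diverse base-learner pool, using deliberately simple stand-ins rather than Prophet or LightGBM so it runs with NumPy alone. Each hypothetical learner takes a history and a horizon and returns a forecast; they differ in what they assume (flat level, weekly pattern, linear trend), which is the kind of diversity this step calls for.

```python
import numpy as np

def naive(history, horizon):
    """Repeat the last observed value."""
    return np.repeat(history[-1], horizon)

def seasonal_naive(history, horizon, season=7):
    """Repeat the last full seasonal cycle (weekly for daily data)."""
    last_cycle = history[-season:]
    reps = int(np.ceil(horizon / season))
    return np.tile(last_cycle, reps)[:horizon]

def moving_average(history, horizon, window=14):
    """Flat forecast at the mean of the recent window."""
    return np.repeat(history[-window:].mean(), horizon)

def linear_trend(history, horizon):
    """Extrapolate a least-squares straight line."""
    t = np.arange(len(history))
    slope, intercept = np.polyfit(t, history, deg=1)
    future_t = np.arange(len(history), len(history) + horizon)
    return intercept + slope * future_t

BASE_LEARNERS = {
    "naive": naive,
    "seasonal_naive": seasonal_naive,
    "moving_average": moving_average,
    "linear_trend": linear_trend,
}

# Toy daily series: upward trend plus a weekly cycle.
t = np.arange(56)
history = 100 + 0.5 * t + 10 * np.sin(2 * np.pi * t / 7)

forecasts = {name: fn(history, horizon=7) for name, fn in BASE_LEARNERS.items()}
for name, f in forecasts.items():
    print(name, np.round(f, 1))
```

Each learner is blind to something the others capture, which is exactly what gives a meta-model room to improve on any one of them.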

Stack with Temporal K-Fold Cross-Validation: To avoid data leakage, they partitioned historical data into time-ordered folds, training base models on earlier chunks and validating on the period that follows. This preserves time order, and the resulting out-of-fold predictions become the stacked features for the next layer. Pro move: Use rolling windows for realism. DoorDash’s setup cut validation time from hours to minutes.
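A minimal sketch of time-ordered cross-validation that produces stacked features. The helpers `temporal_folds` and `stack_out_of_fold` are hypothetical, not DoorDash’s code; they assume evenly spaced cutoffs, a fixed validation horizon, and either an expanding or a rolling training window. Every training index precedes every validation index, so no future data leaks into the base models.

```python
import numpy as np

def temporal_folds(n_obs, n_folds, horizon, min_train, window=None):
    """Yield (train_idx, valid_idx) pairs that respect time order.

    Each fold trains on data before a cutoff and validates on the next
    `horizon` points. With window=None the training set expands; with an
    integer window it rolls (only the most recent `window` points).
    """
    last_cutoff = n_obs - horizon
    cutoffs = np.linspace(min_train, last_cutoff, n_folds).astype(int)
    for cutoff in cutoffs:
        start = 0 if window is None else max(0, cutoff - window)
        yield np.arange(start, cutoff), np.arange(cutoff, cutoff + horizon)

def stack_out_of_fold(y, base_learners, **fold_kwargs):
    """Build meta-features: each row holds every base model's forecast for one validation point."""
    features, targets = [], []
    for train_idx, valid_idx in temporal_folds(len(y), **fold_kwargs):
        history = y[train_idx]
        horizon = len(valid_idx)
        preds = [fn(history, horizon) for fn in base_learners.values()]
        features.append(np.column_stack(preds))
        targets.append(y[valid_idx])
    return np.vstack(features), np.concatenate(targets)

# Two toy base learners (hypothetical stand-ins for Prophet, LightGBM, etc.).
base_learners = {
    "naive": lambda hist, k: np.repeat(hist[-1], k),
    "mean_28": lambda hist, k: np.repeat(hist[-28:].mean(), k),
}

rng = np.random.default_rng(0)
y = 100 + np.cumsum(rng.normal(0, 1, 200))

X_meta, y_meta = stack_out_of_fold(
    y, base_learners, n_folds=5, horizon=7, min_train=100, window=84
)
print(X_meta.shape, y_meta.shape)  # (35, 2) (35,)
```

`X_meta` and `y_meta` are what the top-layer model is trained on; passing `window=None` switches to an expanding window.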

Fit the Top-Layer Ensemble: The stacked data then feeds a flexible meta-model, anything from linear regression for speed to neural nets for depth. The result? Weights that amplify the strongest base models at each forecast horizon.
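A minimal sketch of the top layer, assuming the simplest meta-model mentioned above: ordinary least-squares linear regression (via NumPy’s `lstsq`), fit separately for each forecast horizon so each horizon gets its own blend weights. The stacked data is synthetic; in practice it would be out-of-fold base-model forecasts. The helpers `fit_meta_per_horizon` and `predict_meta` are hypothetical.

```python
import numpy as np

def fit_meta_per_horizon(stacked):
    """Fit one linear meta-model per horizon.

    stacked: dict mapping horizon -> (X, y), where X has one column per base model.
    Returns dict mapping horizon -> coefficient vector [intercept, w_1, ..., w_m].
    """
    weights = {}
    for h, (X, y) in stacked.items():
        design = np.column_stack([np.ones(len(X)), X])  # add intercept column
        coef, *_ = np.linalg.lstsq(design, y, rcond=None)
        weights[h] = coef
    return weights

def predict_meta(weights, h, X_new):
    """Blend new base-model forecasts for horizon h."""
    coef = weights[h]
    return coef[0] + X_new @ coef[1:]

# Synthetic stacked data: model A degrades with horizon, model B does not.
rng = np.random.default_rng(42)
stacked = {}
for h in (1, 7, 28):
    y = rng.normal(100, 10, 300)
    model_a = y + rng.normal(0, 1 + 0.5 * h, 300)  # sharp short-term, noisy long-term
    model_b = y + rng.normal(0, 5, 300)            # steady error at all horizons
    stacked[h] = (np.column_stack([model_a, model_b]), y)

weights = fit_meta_per_horizon(stacked)
for h, coef in weights.items():
    print(f"h={h:>2}: intercept={coef[0]:.2f}, w_A={coef[1]:.2f}, w_B={coef[2]:.2f}")
```

Model A dominates at h=1 and model B at h=28, mirroring the “amplify strong performers per horizon” idea. A neural-net meta-model would replace `lstsq`, but the interface (stacked base forecasts in, one blended forecast out) stays the same.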

Per DoorDash’s benchmarks, this approach to training time series ensembles slashed runtime by 78% and costs by 95% on Spark clusters. On Ray clusters, the results were even better: 50% faster execution and 93% lower cost.

Tech Stack: Distributed Training for Forecasting Magic

Efficiency isn’t accidental. DoorDash layered nested parallelization: outer loops over targets, inner loops over base models, all on Ray, the distributed computing framework OpenAI has used to scale GPT training. KubeRay on Kubernetes kept it stable and spared them the log-digging headaches they had with Spark.
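The nested pattern (outer loop over targets, inner loop over base models) can be sketched with Python’s standard `concurrent.futures` as a stand-in for Ray. This is not DoorDash’s code, and `train_base_model` is a hypothetical placeholder for real model fitting. In Ray, each call would typically become a remote task (a function decorated with `@ray.remote`, collected with `ray.get`), letting the scheduler spread both levels across a cluster.

```python
from concurrent.futures import ThreadPoolExecutor

def train_base_model(target, base_name):
    """Hypothetical placeholder: fit one base model for one target and return a summary."""
    # A real version would load the target's history and fit Prophet, LightGBM, etc.
    return {"target": target, "base": base_name, "score": len(target) + len(base_name)}

def train_all_bases(target, base_names, max_workers=4):
    """Inner level: train every base model for a single target in parallel."""
    with ThreadPoolExecutor(max_workers=max_workers) as inner:
        return list(inner.map(lambda b: train_base_model(target, b), base_names))

def train_all_targets(targets, base_names, max_workers=4):
    """Outer level: fan out over targets; each worker runs its own inner pool."""
    with ThreadPoolExecutor(max_workers=max_workers) as outer:
        results = outer.map(lambda t: train_all_bases(t, base_names), targets)
        return dict(zip(targets, results))

targets = ["submarket_001", "submarket_002", "submarket_003"]
base_names = ["prophet", "lightgbm", "ets"]
results = train_all_targets(targets, base_names)
print(len(results), len(results["submarket_001"]))  # 3 3
```

Threads keep the sketch portable; for CPU-heavy fitting you would use processes or Ray workers instead, since Python threads share the GIL.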

Their generic wrappers are game-changers: plug in any model, custom or off-the-shelf, and it integrates automatically. That modularity means you can swap Prophet for a new GluonTS model with zero refactoring. For you: Ray is open source, so you can prototype on a laptop or small cluster before scaling out; it’s a 6x speedup waiting to happen.
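A minimal sketch of what a generic wrapper might look like, assuming a simple `fit`/`predict` contract defined with `typing.Protocol`. The names `ForecastModel`, `LastValueModel`, `DriftModel`, and `run_pipeline` are hypothetical; DoorDash’s actual wrapper interface isn’t described here. Any model that satisfies the contract, including an adapter around Prophet or GluonTS, could be dropped into `run_pipeline` without touching pipeline code.

```python
from typing import Protocol, Sequence

class ForecastModel(Protocol):
    """Contract every base model must satisfy to plug into the pipeline."""
    def fit(self, history: Sequence[float]) -> "ForecastModel": ...
    def predict(self, horizon: int) -> list[float]: ...

class LastValueModel:
    """Naive baseline: repeats the last observation."""
    def fit(self, history):
        self.last = history[-1]
        return self

    def predict(self, horizon):
        return [self.last] * horizon

class DriftModel:
    """Extends the average step between the first and last observation."""
    def fit(self, history):
        self.last = history[-1]
        self.slope = (history[-1] - history[0]) / (len(history) - 1)
        return self

    def predict(self, horizon):
        return [self.last + self.slope * (k + 1) for k in range(horizon)]

def run_pipeline(models: dict[str, ForecastModel], history, horizon):
    """Pipeline code never changes when a new wrapped model is added."""
    return {name: m.fit(history).predict(horizon) for name, m in models.items()}

history = [10.0, 12.0, 14.0, 16.0]
print(run_pipeline({"last": LastValueModel(), "drift": DriftModel()}, history, horizon=3))
# {'last': [16.0, 16.0, 16.0], 'drift': [18.0, 20.0, 22.0]}
```

Because the pipeline depends only on the contract, adding a model means writing one small adapter class rather than editing shared code.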

Benefits That Pack a Punch: Why Ensemble Models Improve Time Series Predictions

DoorDash’s ELITE isn’t hype; it delivers results. On weekly order volumes across thousands of submarkets, it improved accuracy (measured by MAPE) by 12% over the best single model, all while unblocking high-dimensional jobs like switchback delivery-time forecasts.
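For reference, MAPE (mean absolute percentage error) averages |actual - forecast| / |actual| across points, and a “12% gain” is most naturally read as a relative reduction in that error. A minimal sketch with made-up numbers; reading the 12% as relative rather than as percentage points is an assumption, since the figure’s basis isn’t spelled out.

```python
import numpy as np

def mape(actual, forecast):
    """Mean absolute percentage error, in percent. Assumes no zero actuals."""
    actual = np.asarray(actual, dtype=float)
    forecast = np.asarray(forecast, dtype=float)
    return float(np.mean(np.abs(actual - forecast) / np.abs(actual)) * 100)

def relative_improvement(baseline_error, new_error):
    """Fractional error reduction, e.g. 0.12 means 12% lower error."""
    return (baseline_error - new_error) / baseline_error

actual = [200, 250, 300, 400]
best_single = [220, 230, 330, 360]          # hypothetical best single model
ensemble = [217.6, 232.4, 326.4, 364.8]     # hypothetical ensemble

m_single = mape(actual, best_single)
m_ens = mape(actual, ensemble)
print(round(m_single, 2), round(m_ens, 2), round(relative_improvement(m_single, m_ens), 3))
```

Here MAPE drops from 9.5% to 8.36%, a 12% relative improvement but only about 1.1 percentage points, so it’s worth checking which reading a reported gain uses.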

- Accuracy Edge: Ensembles average out biases, performing well across different forecast horizons. A Towards Data Science deep dive notes stacking can lift performance by 10% under real-world volatility. DoorDash saw stable outputs instead of erratic spikes.

- Speed & Savings: Ditch exhaustive grids; ELITE trains in minutes, not hours. Their switchback use case went from infeasible to 80 minutes, with a 10% variance reduction from CUPED-like covariates.

- Scalability: Handles tens of thousands of forecast targets (5-digit granularities), perfect for e-commerce booms. Trends show ensembles powering 60% of Fortune 500 demand forecasts.

- Lower Risk: Fewer retrains mean less maintenance, and diagnostics reveal each base model’s contribution, spotlighting weak links.
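CUPED (Controlled-experiment Using Pre-Experiment Data) reduces variance by subtracting the part of the outcome a covariate can predict: Y_adj = Y - theta * (X - mean(X)), with theta = cov(X, Y) / var(X). In the switchback case the covariate would be a model forecast rather than pre-period data, hence “CUPED-like”. A minimal sketch on synthetic data; the 10% figure above is DoorDash’s, and the reduction here depends entirely on how well the made-up forecast tracks the outcome. The helper `cuped_adjust` is hypothetical.

```python
import numpy as np

def cuped_adjust(y, x):
    """Return the CUPED-adjusted outcome using covariate x.

    theta = cov(x, y) / var(x) minimizes the variance of the adjusted metric.
    """
    theta = np.cov(x, y)[0, 1] / np.var(x, ddof=1)
    return y - theta * (x - x.mean())

rng = np.random.default_rng(7)
n = 1_000
# Hypothetical forecast of delivery time for each switchback unit (minutes).
forecast = rng.normal(35, 5, n)
# Observed delivery time = forecast signal + unexplained noise.
observed = forecast + rng.normal(0, 4, n)

adjusted = cuped_adjust(observed, forecast)
reduction = 1 - adjusted.var(ddof=1) / observed.var(ddof=1)
print(f"variance reduction: {reduction:.0%}")
```

The adjusted metric keeps the same mean; only the noise shrinks. In theory the reduction equals the squared correlation between covariate and outcome (about 25/41, roughly 61%, for these settings). The covariate must not be affected by the treatment, which is why forecasts made before the experiment are used.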

In short, can ensemble methods outperform single-model forecasting in production? DoorDash’s results say yes, and so do rising adoption rates in retail ML pipelines.

Real-World Forecasting Use Cases: DoorDash's Wins and Lessons

DoorDash’s story shines in action. First up: weekly order volume for financial planning. ELITE explored thousands of configs per submarket, with 83% faster runs and 96% lower costs on Spark, plus 12% better accuracy.

Second: delivery-time forecasts at the switchback level for experiments. Grid search timed out; ELITE finished in under two hours, and its predictions served as covariates that boosted experiment power. Forecast-based covariates like these are now a standard variance-reduction tool at DoorDash.

Case study takeaway: Start small. Pilot on one metric (e.g., sales), scale with Ray. Businesses using ensemble learning for demand or sales forecasting report 15-20% ROI lifts.